As AI-powered web crawlers run rampant, open-source developers are fighting back—with ingenuity, humor, and even a touch of vengeance.

Key Highlights

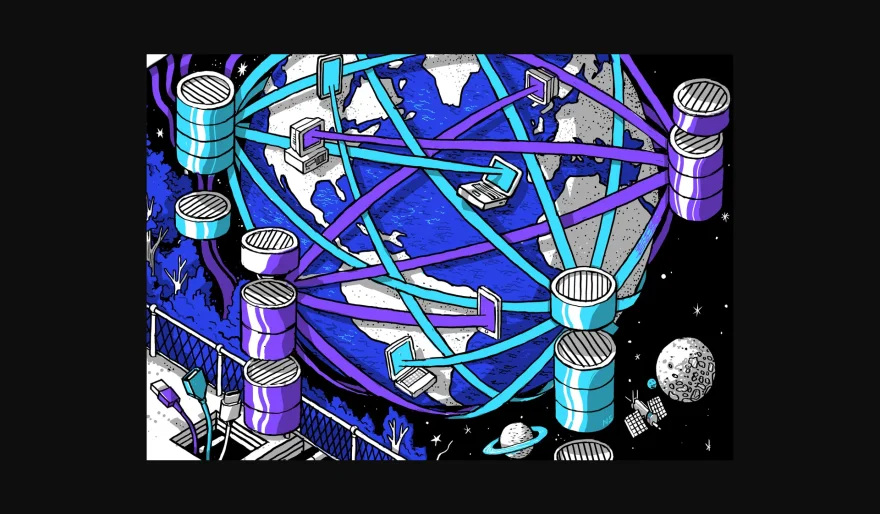

✅ AI Crawlers Overload Servers – Bots ignore "robots.txt" rules and hammer open-source sites, causing DDoS-like slowdowns.

✅ Anubis: The Ultimate Gatekeeper – Developer Xe Iaso created a proof-of-work challenge that blocks bots while letting humans through.

✅ Vengeful Traps – Tools like Nepenthes and Cloudflare’s AI Labyrinth lure and confuse AI crawlers with endless loops of fake data.

✅ Drastic Measures – Some devs have resorted to blocking all traffic from entire countries to fend off aggressive AI scraping.
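
For readers curious what a proof-of-work gate looks like in practice, here is a minimal Python sketch of the general idea. It is not Anubis's actual code: the function names, the SHA-256 scheme, and the `difficulty` parameter are all assumptions chosen for illustration. The server hands out a random challenge, the visitor's browser burns a little CPU finding a matching nonce, and the server checks the answer with a single hash. That cost is negligible for one human visit but adds up for a bot fetching thousands of pages.

```python
import hashlib
import secrets


def make_challenge() -> str:
    """Server side: issue a random, single-use challenge string (hypothetical helper)."""
    return secrets.token_hex(16)


def _digest(challenge: str, nonce: int) -> str:
    # Hash the challenge together with the candidate nonce.
    return hashlib.sha256(f"{challenge}{nonce}".encode()).hexdigest()


def solve(challenge: str, difficulty: int) -> int:
    """Client side: find the smallest nonce whose hash starts with `difficulty` hex zeros."""
    prefix = "0" * difficulty
    nonce = 0
    while not _digest(challenge, nonce).startswith(prefix):
        nonce += 1
    return nonce


def verify(challenge: str, nonce: int, difficulty: int) -> bool:
    """Server side: checking costs one hash, however long solving took."""
    return _digest(challenge, nonce).startswith("0" * difficulty)


if __name__ == "__main__":
    challenge = make_challenge()
    nonce = solve(challenge, difficulty=4)  # roughly 65,000 hashes on average
    print("accepted" if verify(challenge, nonce, 4) else "rejected")
```

Each extra hex digit of difficulty multiplies the expected solving work by 16, so an operator could tune how expensive bulk crawling becomes without making the wait noticeable for a single visitor.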

Why It Matters

🚀 AI vs. Open Source Battle – Developers are pushing back as AI models scrape FOSS projects for training data without consent.

🔍 Clever vs. Malicious Defense – Tools like Anubis protect sites, while traps like Nepenthes actively poison AI datasets.

💡 A Call for Regulation? – Without clear AI crawling policies, more extreme countermeasures are emerging.
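
To make the "endless loop" idea concrete, here is a toy Python sketch of a crawler maze. It does not reproduce how Nepenthes or Cloudflare's AI Labyrinth work internally; the `/maze/` URL prefix and the `fake_page` function are hypothetical. Every requested path deterministically produces a page of filler whose links point to yet more generated pages, so a crawler that ignores the rules never runs out of URLs to fetch.

```python
import hashlib


def fake_page(path: str, links: int = 3) -> str:
    """Return a filler HTML page whose links lead only to more generated pages."""
    # Derive everything from a hash of the path, so no state needs to be stored
    # and the same URL always yields the same page.
    seed = hashlib.sha256(path.encode()).hexdigest()
    hrefs = [f"/maze/{seed[i * 8:(i + 1) * 8]}" for i in range(links)]
    items = "\n".join(f'<li><a href="{h}">{h}</a></li>' for h in hrefs)
    return (
        "<html><body>"
        f"<p>Entry {seed[:12]}</p>"
        f"<ul>\n{items}\n</ul>"
        "</body></html>"
    )


if __name__ == "__main__":
    print(fake_page("/maze/start"))
```

In a real deployment a handler like this would sit behind a path that robots.txt disallows, so only crawlers that ignore the rules ever wander in.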

Will open-source devs outsmart AI crawlers, or is this just the beginning of a digital arms race?

AI Agents