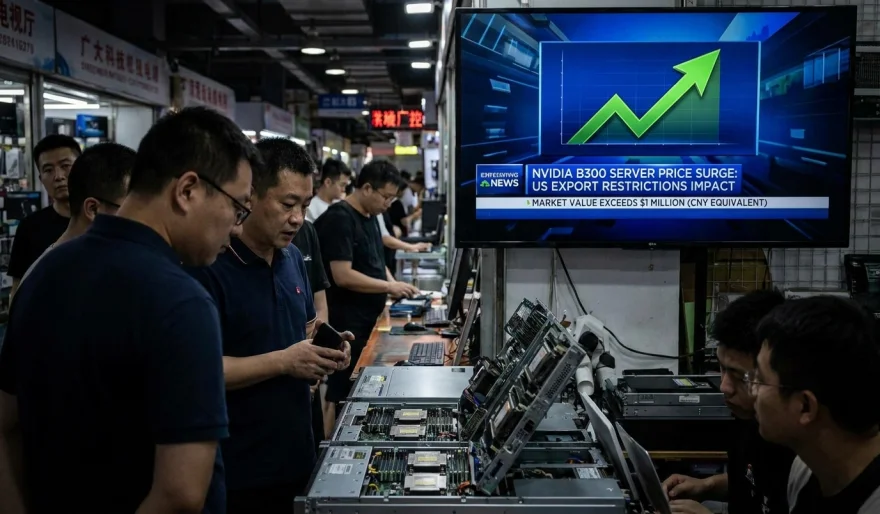

Nvidia B300 Servers Hit $1M in China as US Export Curbs Tighten

Nvidia’s B300 AI servers are reportedly selling for up to $1 million in China, nearly double their U.S. price, due to strict U.S. export restrictions and limited supply. The surge highlights how AI hardware has become a geopolitical asset, with access to advanced GPUs now shaping who can scale AI systems globally.

May 08, 2026 10:36

Nvidia’s high-end AI servers built around its B300 chips are now reportedly selling for around $1 million each in China, according to sources cited by Reuters.

The same server costs roughly $550,000 in the U.S., but prices in China have nearly doubled due to strict U.S. export controls and a shrinking grey market supply.
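To put "nearly doubled" in numbers, the reported figures can be run through a quick calculation. This is a rough sketch using the approximate prices quoted above; `scarcity_premium` is a hypothetical helper name chosen for illustration, not something from the Reuters report.

```python
def scarcity_premium(china_price: float, us_price: float) -> tuple[float, float]:
    """Return (price ratio, premium as a percentage of the U.S. price)."""
    if us_price <= 0:
        raise ValueError("us_price must be positive")
    ratio = china_price / us_price
    premium_pct = (ratio - 1.0) * 100.0
    return ratio, premium_pct


# Approximate prices as reported in the article (assumed round figures).
ratio, premium_pct = scarcity_premium(1_000_000, 550_000)
print(f"{ratio:.2f}x the U.S. price, a premium of about {premium_pct:.0f}%")
```

On these figures, a Chinese buyer pays roughly 1.8 times the U.S. price, which is a markup of a little over 80 percent.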

What’s driving the spike

The surge isn’t about production costs — it’s about access. U.S. restrictions on advanced AI chips have limited direct shipments to China, while crackdowns on smuggling networks have further squeezed unofficial supply channels. That combination has created a severe scarcity premium for Chinese buyers trying to access top-tier AI compute.

At the same time, demand inside China is still exploding. AI companies there are racing to scale model training and inference workloads, and Nvidia hardware remains the gold standard despite restrictions.

The bigger picture

This price jump highlights a growing reality in the global AI race: compute is becoming geopolitically controlled infrastructure.

Instead of competing only on models, countries and companies are now competing on access to GPUs — the core engine behind modern AI systems. That’s turning Nvidia hardware into a strategic asset rather than just a commercial product.

Why it matters

If AI progress is fundamentally tied to compute, then restrictions like these don’t just affect pricing — they reshape who can build advanced AI at scale. China is being forced to rely more on domestic alternatives, while Nvidia remains central to global AI development, even in markets where it technically can’t operate freely.

In short: the AI boom is no longer just a tech story — it’s becoming a supply-chain and geopolitics story.
