
Qwen Chat Adds Voice and Video: A New Era of AI Interaction

AI just got a voice, and a face. Qwen Chat now lets you talk and video chat with an AI in real time, powered by Alibaba’s new multimodal model. It’s like FaceTiming your assistant, and it might change how we interact with machines.

March 27, 2025 15:21

Qwen Chat just leveled up. As of March 27, 2025, you can speak and video chat with the AI, making your interactions feel less like typing commands and more like a real conversation.

The Details:

-

Voice and Video Chat: Users can now engage with the AI through real-time voice or video, similar to a phone or video call.

-

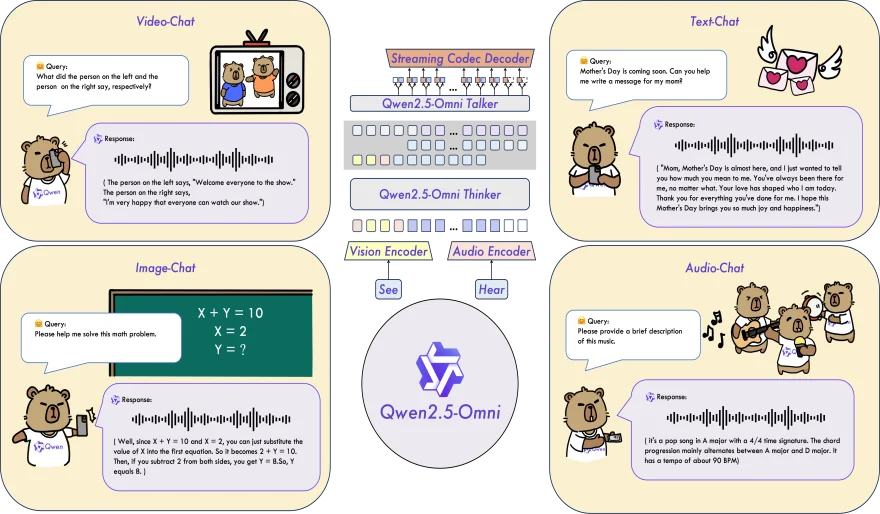

Powered by Qwen2.5-Omni-7B: This new open-source, multimodal model—developed by Alibaba Cloud—can process text, images, audio, and video inputs, and respond in both text and lifelike speech.

-

Multimodal Capabilities: In addition to real-time conversations, Qwen Chat still supports text-based chat, document processing, image understanding, video interpretation, and image generation.

-

Open Access: Qwen2.5-Omni-7B is available under the Apache 2.0 license, making it freely accessible to developers and AI enthusiasts.

-

How to Try It: Visit chat.qwen.ai, select the Qwen2.5-Omni-7B model, and start using voice or video. A live demo is also available to showcase the experience.

Why It Matters:

This update makes Qwen Chat one of the most versatile AI platforms available today: a single interface now handles text, documents, images, video, and live voice and video conversations. By adding voice and video, it narrows the gap between human and machine communication. Whether you’re a casual user, a creator, or a developer, this shift offers a more intuitive, natural way to engage with AI.

It also marks a major step toward multimodal AI becoming the new normal, and it’s only March. 2025 is already shaping up to be a game-changing year.
