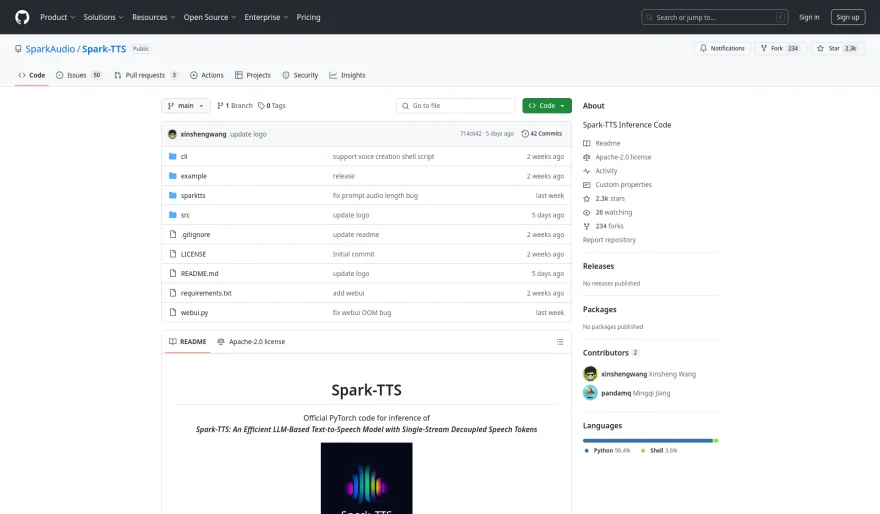

Spark-TTS: Revolutionizing Text-to-Speech with Cutting-Edge AI

March 10, 2025 04:13 | 5 min read

"Spark-TTS is here, and it’s a game-changer. Powered by Qwen2.5, it offers zero-shot voice cloning, seamless language switching, and fine-grained voice control. Open-source, efficient, and packed with innovations like BiCodec and VoxBox. Check it out on GitHub and Hugging Face. What will YOU create with it?"

Spark-TTS is a state-of-the-art text-to-speech (TTS) system developed by SparkAudio, powered by advanced large language models (LLMs). As of the latest updates in March 2025, Spark-TTS sets a new standard for natural and efficient speech synthesis. Here’s everything you need to know about its groundbreaking features, recent developments, and practical applications.

Key Features and Innovations

Powered by Qwen2.5 LLM:

Spark-TTS is built entirely on the Qwen2.5 LLM, eliminating the need for additional generative models like flow matching. By directly reconstructing audio from LLM-predicted codes, the system simplifies the synthesis process while enhancing efficiency.

BiCodec Technology:

Spark-TTS introduces BiCodec, a single-stream speech codec that splits speech into two token types:

Low-bitrate semantic tokens for linguistic content.

Fixed-length global tokens for speaker attributes.

This innovative decoupling allows for both coarse-grained control (e.g., gender, speaking style) and fine-grained adjustments (e.g., pitch, speed).
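The split into two token types can be pictured with a small toy model. This is only an illustrative sketch, not BiCodec itself: `SpeechTokens`, `toy_encode`, and `swap_speaker` are hypothetical names, the token ids are placeholders, and the rate of 50 semantic tokens per second and the 32 global tokens are assumed values chosen for the example rather than figures from this article.

```python
from dataclasses import dataclass, replace

# Assumed, illustrative values; the article does not state them.
SEMANTIC_TOKENS_PER_SECOND = 50
NUM_GLOBAL_TOKENS = 32


@dataclass(frozen=True)
class SpeechTokens:
    semantic: tuple[int, ...]       # linguistic content; length grows with duration
    global_tokens: tuple[int, ...]  # speaker attributes; fixed length


def toy_encode(duration_s: float, speaker_id: int) -> SpeechTokens:
    """Stand-in for a codec encoder that emits placeholder token ids."""
    n_semantic = int(duration_s * SEMANTIC_TOKENS_PER_SECOND)
    semantic = tuple(range(n_semantic))
    global_tokens = tuple([speaker_id] * NUM_GLOBAL_TOKENS)
    return SpeechTokens(semantic, global_tokens)


def swap_speaker(content: SpeechTokens, voice: SpeechTokens) -> SpeechTokens:
    """Keep the content tokens of one utterance and take speaker tokens from another."""
    return replace(content, global_tokens=voice.global_tokens)
```

In this toy picture, a longer utterance yields more semantic tokens while the global tokens stay the same size, and swapping the global tokens changes the "voice" without touching the content. That is the intuition behind controlling speaker attributes separately from what is being said.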

Zero-Shot Voice Cloning:

Spark-TTS excels at replicating voices without speaker-specific training data, making it ideal for cross-lingual and code-switching applications. It seamlessly transitions between languages like English and Chinese while maintaining exceptional naturalness.

VoxBox Dataset:

To support research and development, the Spark-TTS project introduces VoxBox, a massive 100,000-hour dataset with detailed attribute annotations. This enables highly controllable and customizable TTS applications.

Recent Developments

Release Timeline:

As of early March 2025, SparkAudio has released the inference code, pre-trained models (e.g., Spark-TTS-0.5B), and audio samples on GitHub and Hugging Face. The Spark-TTS paper, published on arXiv on March 3, 2025 (arXiv:2503.01710), provides a detailed breakdown of its architecture and performance.

Open-Source Availability:

Spark-TTS is open source, targeting academic research, education, and legitimate applications like personalized speech synthesis and assistive technologies. While the inference tools and models are already available, the training code and VoxBox dataset are expected to follow soon.

Performance:

According to the paper, Spark-TTS achieves state-of-the-art results in zero-shot voice cloning and generates highly customizable voices that go beyond the limits of reference-based synthesis.

Practical Use

Inference:

Users can run Spark-TTS via a command-line interface (CLI) or a web UI. For example:

CLI command:

python -m cli.inference --text "text to synthesis" --device 0 --model_dir pretrained_models/Spark-TTS-0.5B

Web UI (for voice cloning and creation):

python webui.py --device 0
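For scripted or batch use, the CLI call above can be wrapped from Python. The following is a minimal sketch that only uses the flags shown in this article (`--text`, `--device`, `--model_dir`); the helper names `build_inference_command` and `run_inference` are hypothetical, and it assumes the script is run from the root of the Spark-TTS repository so that `cli.inference` can be found.

```python
import subprocess
import sys


def build_inference_command(
    text: str,
    device: int = 0,
    model_dir: str = "pretrained_models/Spark-TTS-0.5B",
) -> list[str]:
    """Build the argument list for the CLI call shown in the article."""
    return [
        sys.executable, "-m", "cli.inference",
        "--text", text,
        "--device", str(device),
        "--model_dir", model_dir,
    ]


def run_inference(text: str, **kwargs) -> subprocess.CompletedProcess:
    """Run the CLI; assumes the current directory is the Spark-TTS repo root."""
    cmd = build_inference_command(text, **kwargs)
    # check=True raises CalledProcessError if the CLI exits with an error.
    return subprocess.run(cmd, check=True)
```

Calling `run_inference("text to synthesis")` would then launch the same command as above. Passing the arguments as a list rather than a single shell string avoids quoting problems when the text contains spaces or quotation marks.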

Installation:

Spark-TTS requires Python 3.12, with dependencies installable via pip install -r requirements.txt. Pre-trained models can be downloaded from Hugging Face or cloned via Git.

Demos:

Explore audio samples showcasing zero-shot cloning on the project’s demo page, accessible through the GitHub repository.
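For the Hugging Face download mentioned under Installation, one possible approach is the `snapshot_download` function from the `huggingface_hub` package. This is a sketch under assumptions: the repository id `SparkAudio/Spark-TTS-0.5B` is inferred from the model name and is not stated in this article, the helper names are hypothetical, and `huggingface_hub` must be installed separately.

```python
from pathlib import Path


def local_model_dir(repo_id: str, root: str = "pretrained_models") -> Path:
    """Map a Hugging Face repo id to the local folder used by the CLI example."""
    return Path(root) / repo_id.split("/")[-1]


def download_model(
    repo_id: str = "SparkAudio/Spark-TTS-0.5B",  # assumed repo id
    root: str = "pretrained_models",
) -> Path:
    """Download all files of the model repo into the local model folder."""
    # Imported here so the helper above works without the dependency installed.
    from huggingface_hub import snapshot_download

    target = local_model_dir(repo_id, root)
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

With the defaults, the files end up in `pretrained_models/Spark-TTS-0.5B`, which matches the `--model_dir` value used in the CLI command.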

Current Sentiment

The release of Spark-TTS has sparked widespread excitement, particularly among researchers and developers. Posts on X (formerly Twitter) in early March 2025 highlight its efficiency, bilingual support, and unparalleled control over voice attributes. The open-source nature of the project has also been praised for enabling rapid prototyping in voice applications.

Availability

As of March 10, 2025, Spark-TTS is actively supported with resources on GitHub and Hugging Face. While the core inference tools and models are already available, keep an eye on SparkAudio’s repositories for the upcoming release of the training code and VoxBox dataset.

For updates after this date, check SparkAudio’s GitHub repository and the arXiv paper.
