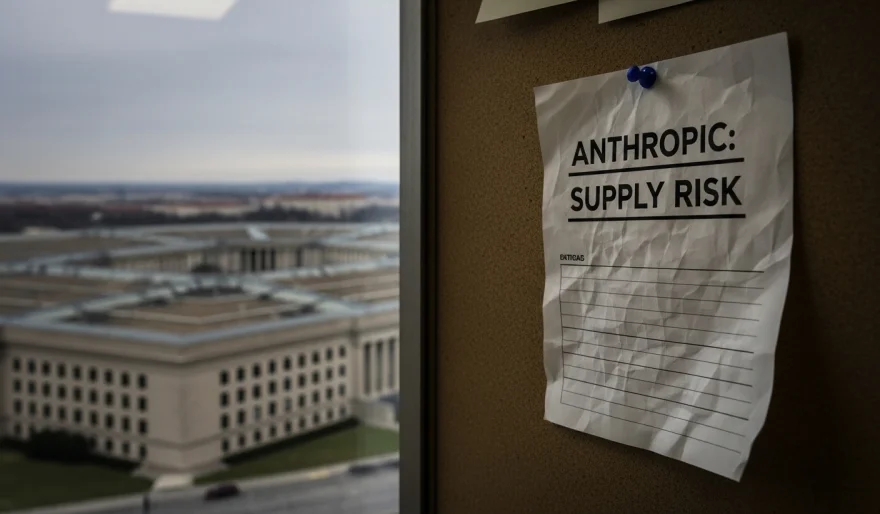

Trump Orders Agencies to Drop Anthropic After Pentagon Flags ‘Supply Risk’

President Trump has directed U.S. federal agencies to stop using AI tools from Anthropic after the United States Department of Defense labeled the company a potential “supply risk.”

March 02, 2026 14:13

The White House has reportedly directed U.S. federal agencies to stop using AI systems from Anthropic after the Pentagon labeled the startup a potential “supply risk.”

The decision follows an internal assessment by the United States Department of Defense, which raised concerns about relying on a single AI vendor for critical government workflows. Officials fear that heavy dependence on one frontier AI provider could expose agencies to operational disruptions, geopolitical pressure, or compliance blind spots.

While Anthropic has positioned itself as a safety-focused alternative in the AI race — especially with its Claude models — the move signals a broader shift in Washington’s AI procurement strategy: diversify or risk vulnerability.

The directive could reshape how federal contracts are distributed among AI firms, potentially benefiting competitors like OpenAI and Google DeepMind as agencies reassess vendor concentration risk.

Why this matters:

AI is rapidly becoming embedded in defense logistics, intelligence analysis, and internal operations. If the Pentagon views a leading AI startup as a supply-chain liability, it suggests the government is now treating advanced AI models less like software — and more like strategic infrastructure.

Hot take: This isn’t just about Anthropic. It’s an early signal that AI vendors serving governments may soon be evaluated the same way defense contractors are — on resilience, geopolitical alignment, and long-term continuity, not just model performance.
